Reality and myth in the quest for green computing

Got the Power

© Taffi, Fotolia

Klaus sorts out fact and fiction in the debate on saving power with some real-world tests.

As the computer magazines all too often report, computers are a big-time waste of energy, and a single Internet keyword search in a browser causes an unexpectedly high peak in power consumption for network components all over the planet, manifesting itself in the results page. Many experts, who are probably related to salesmen, will tell you the "industry standard solution" is to use an operating system that supports all the "advanced power management" features, which will make all electronic parts of your computer significantly more environmentally friendly. Once you start investigating this problem, however, you cannot help noticing that this optimistic assessment is (almost) totally wrong.

In addition, I am not even talking about the extra energy that goes into producing the additional controllers and circuits for improved power management. Perhaps you have heard that many photovoltaic cells require more energy to manufacture than they will ever get back from sunlight during their entire life cycle. This observation is not directly related to computers, but it raises the same point.

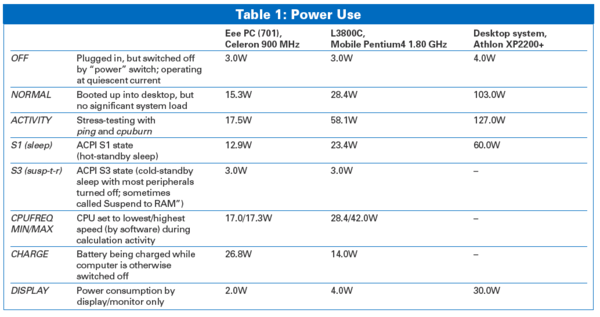

Most of the benefits in terms of power-saving effects that have been advertised for "intelligent" peripheral or mainboard features seem like a marketing gag once you actually measure the difference (see Table 1).

Power Tests

For my tests, I used three computers: an older Asus L3800C notebook, a new Eee PC model 701, and a medium-aged AMD Athlon XP 2200+ (Figure 1). To rule out any influence of battery charging or peripheral devices, I removed batteries from the notebooks and disconnected all external devices from the computers.

With the use of software controls to switch off internal components like WLAN, a built-in webcam, or a card reader, power consumption did not change significantly; therefore, it was not even mentioned in the results table.

The first surprise (if you were not already suspicious) is that even in the "switched off" state, all tested computers consume energy – all of them around 4 watts. And I am not talking about Suspend to RAM power here – really, no lights were blinking, and the computers had not been powered up since being connected to the power supply. This situation occurs because modern power supplies are never really physically disconnected, and they need some power for themselves to support handling the button on the front of the computer that says ON. Mechanical power switches that audibly "click" when you press them, thus physically separating or connecting to the electrical network, are considered antique today, and apart from that, they would not allow Resume from RAM, which is a topic I will examine later.

So, in all measurements, you have to take into account that up to 10 watts (for really well outfitted computers or monitors in standby mode) are just wasted away when the computer is actually OFF. At the cost of EUR 0.25 per kilowatt hour, this means that you pay around EUR 21.90 each year for a computer that just sits there and is not even switched on. (By the way, you might want to look at your TV's power consumption in standby mode now, not to mention coffee machines, … .)
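The arithmetic behind the yearly figure can be sketched in a few lines of shell. This is only an illustration: the function name `annual_cost` is made up for this example, and it assumes a constant load running 24 hours a day, 365 days a year.

```shell
# annual_cost WATTS PRICE_PER_KWH
# Prints the yearly cost of a constant load that runs around the clock.
# (Hypothetical helper for illustration; awk does the floating-point math.)
annual_cost() {
    awk -v w="$1" -v p="$2" 'BEGIN { printf "%.2f\n", w * 24 * 365 / 1000 * p }'
}

annual_cost 10 0.25   # worst-case standby figure from the text, prints 21.90
annual_cost 4 0.25    # the roughly 4 watts measured when "off", prints 8.76
```

The same function works for any other wattage you measure, so you can plug in your own readings and electricity rate.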

To prevent this, you could, of course, buy a switch like that shown in Figure 2, and some computer shops offer a master-to-slave socket that automatically turns peripheral devices on only when the device in the main socket is switched on. But then, these intelligent sockets still draw some power of their own, which might or might not exceed the standby power of the computer itself.

When I powered up the computers (batteries removed), power jumped to 103 watts for the desktop computer (133 watts when adding the TFT monitor), 28 watts for the L3800C notebook, and (the winner is) only 15.3 watts for the Eee PC. Once the computers were up and running Linux, power consumption went down by a few milliwatts because hard disk access had ended; besides, Linux activates some power management features of its own.

Running the computers for a year with this power consumption would cost you (at the rates mentioned above) about EUR 225.57 for the desktop, EUR 61.32 for the L3800C, and EUR 33.51 for the Eee PC. Although I cannot tell you exactly how much CO2 this produces, the comparison at least suggests that the most power-hungry computer of the three probably does not justify its performance of two times the speed of the Eee PC from the energy cost side.

Now you might have heard that "computing very hard" consumes more power than just staring at the screen and doing nothing, so I will test this theory by running a little stress test for the three candidates here.

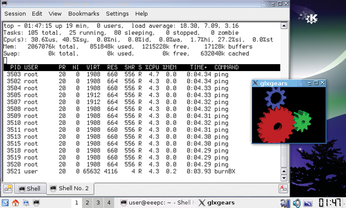

For checking throughput (and occasionally finding bad RAM when memcheck does not), one of my favorite tests to make a system really slow and waste resources is

sudo ping -f localhost >/dev/null 2>&1 &

Each time you call this command, system load, as shown by the top utility (Figure 3), increases by about 1, so I start it 10 times to really give the computer something to do. Compiling a kernel with make -j 10 should do the same trick if you also want to see whether the hard disk makes a difference when reading and writing. Because my ping commands cause more "internal traffic" on the system than they stress the CPU, I also start cpuburn (burnBX, burnP4, … depending on the parts of the CPU you want to heat up). Please be advised that cpuburn really has the potential to burn CPUs, at least if they are overclocked or insufficiently cooled (just a warning for the curious).
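Starting the same command 10 times by hand gets tedious, so a small helper can do the counting. The following is a minimal sketch, not part of the original test setup: the names `start_load`, `stop_load`, and `LOAD_PIDS` are invented for this example.

```shell
# start_load COUNT COMMAND [ARGS...]
# Starts COUNT background copies of COMMAND and remembers their PIDs
# in LOAD_PIDS so they can be stopped again later.
LOAD_PIDS=""
start_load() {
    count=$1
    shift
    i=0
    while [ "$i" -lt "$count" ]; do
        "$@" >/dev/null 2>&1 &
        LOAD_PIDS="$LOAD_PIDS $!"
        i=$((i + 1))
    done
}

# stop_load: terminates everything that start_load has started.
stop_load() {
    for pid in $LOAD_PIDS; do
        kill "$pid" 2>/dev/null || true
    done
    LOAD_PIDS=""
}

# Example (run as root, because ping -f needs root privileges):
#   start_load 10 ping -f localhost
#   ... take the power reading ...
#   stop_load
```

Keeping the PIDs around means the load can be removed again without hunting for stray ping processes after the measurement.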

The results are not really exciting. The Eee PC consumes about 14 percent more power under heavy load and the desktop about 23 percent; the L3800C needs twice as much power now as it did in the idle state, which shows that its CPU is probably the main power consumer here, as opposed to the other two computers.

From the power-saving side, you can't do much about these power maximums and also run at maximum performance because the only choice you have is to compute "less fast," taking maybe twice the time at half the power. This can be done by telling the CPU to go to sleep every now and then between computing cycles, which has been supported by most CPUs since 2000. The Advanced Configuration and Power Interface (ACPI) provides this feature, and the Linux kernel exposes a user interface for it.

By entering

cat /proc/acpi/processor/CPU0/throttling

you can read which "computing delays" are supported for the first CPU (you can find more CPUs or CPU cores by increasing the number after CPU). The output will look similar to this:

state count: 8

active state: T0

state available: T0 to T7

states:

*T0: 100%

T1: 87%

T2: 75%

T3: 62%

T4: 50%

T5: 37%

T6: 25%

T7: 12%

If you enter, as root, the command

echo 4 >/proc/acpi/processor/CPU0/throttling

the CPU is only working "half-time" (or so it will feel), also resulting in less power consumption. The reason someone would want to do this is usually less of an ecological issue: The use of less power also keeps the CPU cooler and avoids a noisy fan or cooling device operating when a certain temperature has been reached.
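Rather than reading the table by eye, you could look up the state number that matches a desired percentage. The sketch below assumes the output format shown above. The function name `throttle_state_for` is hypothetical, and the /proc/acpi/processor interface itself may be absent on newer kernels.

```shell
# throttle_state_for FILE PERCENT
# Prints the number of the first T-state whose performance percentage is
# at or below PERCENT, based on lines such as "*T0: 100%" or "T4: 50%".
throttle_state_for() {
    awk -v want="$2" '
        /T[0-9]+:/ {
            line = $0
            sub(/^[ \t*]*T/, "", line)   # drop indentation, the "*" marker, and "T"
            split(line, part, ":")       # part[1] = state number, part[2] = percent
            pct = part[2]
            gsub(/[^0-9]/, "", pct)      # keep only the digits of the percentage
            if (pct + 0 <= want + 0) { print part[1]; exit }
        }' "$1"
}

# Example (as root, on a system that still has this file):
#   f=/proc/acpi/processor/CPU0/throttling
#   echo "$(throttle_state_for "$f" 50)" > "$f"
```

With the listing above, asking for 50 percent yields state 4, which is the value used in the echo command.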

By the way, you can switch off the CPU fan and activate "passive" cooling for many notebooks with the following code:

echo 1 >/proc/acpi/thermal_zone/THRM/cooling_mode

Usually, the ACPI will revert to active cooling and turn the fan on – regardless of this setting – whenever the CPU gets too hot. However, I cannot completely rule out that a particular ACPI BIOS might depend completely on the operating system for turning the fan back on, even at critical temperatures. Therefore, you should check twice for any reports of failed autocooling before taking the chance of losing your computer's CPU or motherboard in the hope of noise-free computing.

Scaling

Temperature goes up when the CPU produces more heat than it can dissipate, so in order to keep the temperature low, you can just avoid heat by "avoiding work." One way to achieve this is by passively (i.e., no fan) cooling the CPU, as described earlier. Another option is through frequency scaling. However, my desktop computer in the test did not support software frequency scaling, so I only tested this technique on the two notebooks.

Depending on the type of CPU, a matching cpufreq or clockmod kernel module has to be loaded. For the Eee PC, this is

modprobe p4-clockmod

and for the L3800C, the generic acpi_cpufreq is sufficient. The command

cat /sys/devices/system/cpu/cpu0/cpufreq/scaling_available_frequencies

shows a variety of frequencies from 112,500 to 900,000 on the Eee PC, whereas the L3800C only has 1,800,000 and 1,200,000 available. The values are given in kilohertz, so 900,000 corresponds to 900MHz.

The command

cat /sys/devices/system/cpu/cpu0/cpufreq/scaling_cur_freq

shows at what frequency the CPU is currently running. By echoing the desired minimum and maximum frequency to scaling_min_freq and scaling_max_freq, respectively, in the same directory, you can define the limits for automatic scaling (you have to be root to do this, of course).
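To see the current frequency and both limits at a glance, the individual files can be read in one go. This is a rough sketch with invented function names (`khz_to_mhz`, `cpufreq_summary`). It assumes the kernel's file names `scaling_cur_freq`, `scaling_min_freq`, and `scaling_max_freq`, and it only reads values, so it does not need root.

```shell
# khz_to_mhz KHZ
# cpufreq reports frequencies in kHz; print the value in MHz.
khz_to_mhz() {
    awk -v k="$1" 'BEGIN { printf "%.1f\n", k / 1000 }'
}

# cpufreq_summary [DIR]
# Prints current, minimum, and maximum scaling frequency for one CPU.
# DIR defaults to the cpufreq directory of the first CPU.
cpufreq_summary() {
    dir=${1:-/sys/devices/system/cpu/cpu0/cpufreq}
    for name in scaling_cur_freq scaling_min_freq scaling_max_freq; do
        if [ -r "$dir/$name" ]; then
            printf '%s: %s MHz\n' "$name" "$(khz_to_mhz "$(cat "$dir/$name")")"
        fi
    done
}

# Example:
#   cpufreq_summary
```

Passing the directory as an argument makes it easy to check other cores by pointing the function at cpu1, cpu2, and so on.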

The effect of scaling CPU frequency down, even to the very minimum on the Eee PC, drops power consumption by only 300 milliwatts – that is, a difference of only 2 percent compared with "maximum performance." This realization was the first that made me think that I must have forgotten something. But comparing the power consumption of idle vs. "heavy computing mode" on the Eee PC leads to the more likely assumption that the CPU is really not the main power consumer – in that specific notebook anyway.

The cpufreq governor is the scheme that determines whether saving power or improved performance is preferred for the CPU. The best compromise is usually the ondemand option:

echo "ondemand" > /sys/devices/system/cpu/cpu0/cpufreq/scaling_governor

The ondemand governor instructs the kernel to increase or decrease frequency on an as-needed basis. This behavior saves power in idle mode but provides maximum performance when the computer has something to work on. This particular approach gives better results mostly on the L3800C, for which the CPU is the main power factor.
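Not every kernel or driver offers every governor, so it can be worth checking the list in scaling_available_governors before writing to scaling_governor. The helper below is a sketch under that assumption. The name `set_governor` is made up for this example, and writing to the real sysfs file requires root.

```shell
# set_governor NAME [DIR]
# Activates governor NAME if the kernel lists it as available.
# DIR defaults to the cpufreq directory of the first CPU.
set_governor() {
    gov=$1
    dir=${2:-/sys/devices/system/cpu/cpu0/cpufreq}
    # scaling_available_governors holds a space-separated list of names.
    for g in $(cat "$dir/scaling_available_governors"); do
        if [ "$g" = "$gov" ]; then
            echo "$gov" > "$dir/scaling_governor"
            return 0
        fi
    done
    echo "governor '$gov' is not available in $dir" >&2
    return 1
}

# Example (as root):
#   set_governor ondemand
```

If the governor is missing, the function leaves the current setting untouched and returns a non-zero status, which usually means the corresponding kernel module has not been loaded.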

Scaling the Eee PC

The Eee PC CPU's minimum frequency of 112,500 kilohertz (112.5 megahertz) reported by the p4-clockmod module is quite low and will morph the desktop into something almost unusable, with its slower than molasses performance, as soon as the kernel automatically scales down the frequency because it has nothing to do. Therefore, I use half of the Eee PC processor's "maximum" frequency as a minimum instead:

echo 450000 > /sys/devices/system/cpu/cpu0/cpufreq/scaling_min_freq

which is sufficient to run the 3D desktop Compiz Fusion smoothly, even when the computer is idle and, therefore, using a scaled-down CPU frequency.
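The "half of the maximum" value does not have to be hard-coded. As a sketch, it could be derived from the list of available frequencies. The function name `half_of_max_freq` is hypothetical, and whether the result is one of the steps the driver actually supports depends on the hardware.

```shell
# half_of_max_freq FILE
# Reads a space-separated list of frequencies in kHz (the format of
# scaling_available_frequencies) and prints half of the largest value.
half_of_max_freq() {
    awk '{ for (i = 1; i <= NF; i++) if ($i + 0 > max) max = $i + 0 }
         END { printf "%d\n", max / 2 }' "$1"
}

# Example (as root):
#   d=/sys/devices/system/cpu/cpu0/cpufreq
#   half_of_max_freq "$d/scaling_available_frequencies" > "$d/scaling_min_freq"
```

For a list that tops out at 900,000, this prints 450000, the value used above.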

Monitors

Switching the computer's monitor or display off during a pause in processing also can save energy. In my tests, the 19-inch TFT connected to the desktop Athlon system saved 30 watts of power – when the monitor was completely turned off. For the Eee PC notebook, blanking the display (i.e., switching off the background light) saved only 1 watt; however, on the L3800C notebook computer, the savings were about 3 watts, which can extend the battery life by a few minutes. However, this was about the only peripheral device with a noticeable effect on power.

An interesting design feature related to power savings and efficiency is the display on the "One Laptop per Child" OLPC-XO1. When operating in bright sunlight, this computer does not increase power to the backlight, as a typical notebook necessarily would do. Instead, the OLPC computer has a reflective coating built in to the display so that the sunlight works toward amplifying the display contrast. In this way, all graphics and text can be read very well in the difficult conditions of bright sunlight while saving battery power in the bargain. Unfortunately, I have not seen this feature in any standard notebook computers yet.
