A home-built virtual RAID with ATA over Ethernet

Raiding the Net

Lead Image © Kheng Ho Toh, 123RF.com

We'll show you how to build a network-based virtual RAID solution using ATA over Ethernet.

ATA over Ethernet (AoE) is a protocol for accessing media via Ethernet. A remote client can access the device directly through ATA commands, which the protocol software encapsulates, transmits, and disassembles at the other end (Figure 1). AoE is often used for building low-budget Storage Area Network (SAN) devices. An interesting feature of AoE is that it lets you combine remote disks on different systems into a network-based RAID array. In this article, I describe how to set up AoE and use it to create your own network RAID system.

Figure 1: RAID devices over the network with ATA over Ethernet follow a pattern similar to iSCSI, with initiators and targets.

Required Components

The exact specifications for the AoE protocol are available online [1]. As with iSCSI, the literature refers to the system that provides the physical disks as the target; the initiator is the system that integrates the disk.

AoE is very easy to set up on most Linux systems. On the target, you just need to install the vblade package; on Debian systems, this is a painless procedure using:

aptitude install vblade

Installation on the initiator is just as easy, but this time you need the AoE tools, which Debian packages as aoetools (aptitude install aoetools). The aoe kernel module itself ships with the standard Linux kernel, and you can load it with modprobe aoe. If you want to load this module by default at startup, simply add a corresponding entry to your /etc/modules file.
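The /etc/modules entry can be added by hand, but a small idempotent helper avoids duplicate lines if the setup is scripted. The following is a minimal sketch; the function name ensure_module_entry is a hypothetical helper and not part of the AoE tools.

```shell
#!/bin/sh
# Append a module name to a modules file unless an identical line already exists.
# ensure_module_entry is a hypothetical helper name, not part of the AoE tools.
ensure_module_entry() {
    module=$1
    file=$2
    # -q: quiet, -x: match the whole line, -F: treat the name as a fixed string
    if ! grep -qxF "$module" "$file" 2>/dev/null; then
        printf '%s\n' "$module" >> "$file"
    fi
}

# Typical use as root on the initiator:
#   modprobe aoe
#   ensure_module_entry aoe /etc/modules
```

Calling the helper a second time leaves the file unchanged, so it is safe to run on every provisioning pass.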

Providing Media

The vblade program exports disks and partitions over the network. It expects a shelf number, a slot number, the network interface on which to serve the storage, and the device or partition to export. You can also provide a file (e.g., a raw image). The combination of shelf and slot number must be unique on the network.

In addition to buffer settings and the access mode, you can specify MAC addresses as a comma-separated list. This list acts as a whitelist: Only computers with the specified MAC addresses can access the data.
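To illustrate the whitelist, the sketch below only prints the command line it would run. It assumes that the MAC list is passed with the -m option, as described in the vblade(8) man page; check the man page of your installed version for the exact flag and the accepted address notation. The helper name vblade_cmd_with_macs is hypothetical, and any addresses you pass in are your own.

```shell
#!/bin/sh
# Print (not run) a vblade command line restricted to the given MAC addresses.
# vblade_cmd_with_macs is a hypothetical helper; -m is assumed from vblade(8).
vblade_cmd_with_macs() {
    shelf=$1 slot=$2 netif=$3 target=$4
    shift 4
    # Join the remaining arguments (the MAC addresses) with commas.
    macs=$(IFS=,; printf '%s' "$*")
    printf 'vblade -m %s %s %s %s %s\n' "$macs" "$shelf" "$slot" "$netif" "$target"
}

# Usage: shelf, slot, interface, and export first, then one or more MAC addresses:
#   vblade_cmd_with_macs 2 1 eth0 /test/raw_image.raw <mac1> <mac2>
```

Printing the command first makes it easy to review the whitelist before exposing the export on the network.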

For testing purposes, it makes sense to use a raw image. The following command generates a 10GB image:

dd if=/dev/zero of=/test/raw_image.raw bs=1M count=10000

If you prefer to try this with a real disk, simply use the device name (e.g., /dev/sdc1 for the first partition on the hard disk integrated as sdc) in place of the image (/test/raw_image.raw).
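Writing 10,000 blocks of zeros with dd takes a while. For quick experiments, a sparse file of the same nominal size is an alternative. This is a sketch that assumes GNU coreutils (truncate) and a filesystem with sparse file support; make_sparse_image is a hypothetical helper name.

```shell
#!/bin/sh
# Create a sparse image file of the requested size in MiB.
# make_sparse_image is a hypothetical helper; truncate is part of GNU coreutils.
make_sparse_image() {
    path=$1
    size_mib=$2
    # truncate sets the file length without writing data blocks, so the
    # file occupies almost no disk space until it is written to.
    truncate -s "${size_mib}M" "$path"
}

# Same nominal size as the dd call above (10000 blocks of 1 MiB):
#   make_sparse_image /test/raw_image.raw 10000
```

Keep in mind that a sparse image grows as the initiator writes to it, so the target can run out of space later rather than at creation time.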

Shelves and Slots

To share the newly created raw image on the target as Shelf 2, Slot 1, simply type:

vblade 2 1 eth0 /test/raw_image.raw

If all requirements are met on the initiator system, the disk will be visible immediately. If all the requirements are not met, you might need to run the aoe-discover command. To see the media available to the system, you can run,

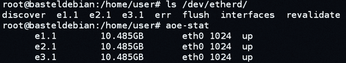

ls /dev/etherd

although you'll get more detailed output if you enter aoe-stat (Figure 2).
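The device name on the initiator follows from the shelf and slot numbers: Shelf 2, Slot 1 appears as /dev/etherd/e2.1. A script that depends on the export can wait for the node to show up. The following sketch uses two hypothetical helper names, aoe_dev_path and wait_for_aoe_dev, and a default timeout of 10 seconds chosen purely for illustration.

```shell
#!/bin/sh
# Map a shelf/slot pair to the device node the aoe driver creates for it.
aoe_dev_path() {
    printf '/dev/etherd/e%s.%s\n' "$1" "$2"
}

# Wait up to $3 seconds (default 10) for the export to appear, triggering
# a rediscovery on each pass if aoe-discover is installed.
wait_for_aoe_dev() {
    dev=$(aoe_dev_path "$1" "$2")
    tries=${3:-10}
    while [ "$tries" -gt 0 ]; do
        if [ -e "$dev" ]; then
            return 0
        fi
        if command -v aoe-discover >/dev/null 2>&1; then
            aoe-discover || true    # a failed discovery should not abort the wait
        fi
        sleep 1
        tries=$((tries - 1))
    done
    return 1
}

# Typical use on the initiator:
#   wait_for_aoe_dev 2 1 && ls -l "$(aoe_dev_path 2 1)"
```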

Using vblade directly is fine for test purposes; it runs in the foreground and prints a lot of debug information until you abort it with Ctrl+C. The corresponding background service is vbladed. In both cases, the export disappears again after a reboot.

If you want to automate the integration of initiators and targets, the tool you need is vblade-persist. However, the program is part of a separate package and first needs to be installed with:

aptitude install vblade-persist

To provide the raw image used above permanently, you first need to set up a persistent export:

vblade-persist setup 2 1 eth0 /test/raw_image.raw

Use absolute paths for the disk or file. To have the export launch automatically at boot, mark it with vblade-persist auto 2 1. To deploy it immediately without a restart, enter vblade-persist start 2 1. For an overview of all the persistent AoE exports and their states, simply call vblade-persist ls.
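The three vblade-persist steps can be bundled into one call. The sketch below is one possible wrapper, not part of the vblade-persist package: persist_export, run, and the DRY_RUN switch are hypothetical names. With DRY_RUN set, the commands are only printed.

```shell
#!/bin/sh
# Register, enable, and start a persistent export in one step.
# persist_export, run, and DRY_RUN are hypothetical; the vblade-persist
# subcommands are the ones described in the text.
run() {
    if [ -n "${DRY_RUN:-}" ]; then
        printf '%s\n' "$*"      # only show what would be executed
    else
        "$@"
    fi
}

persist_export() {
    shelf=$1 slot=$2 netif=$3 target=$4
    case $target in
        /*) ;;    # absolute path: fine
        *)  echo "need an absolute path: $target" >&2; return 1 ;;
    esac
    run vblade-persist setup "$shelf" "$slot" "$netif" "$target" &&
    run vblade-persist auto "$shelf" "$slot" &&
    run vblade-persist start "$shelf" "$slot"
}

# Preview the commands without touching the system:
#   DRY_RUN=1; persist_export 2 1 eth0 /test/raw_image.raw
```

The path check mirrors the requirement for absolute paths and stops before anything is registered if a relative path slips in.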

Network RAID

If you have multiple computers that already run 24/7 on your own network, and if they happen to have unused capacity, you might want to serve up these resources to other PCs. If you take this idea a step further, you end up with something like a RAID system whose storage you can quickly provide from a central location. Because multiple computers are involved, the probability that one of the components fails increases, but choosing an appropriate RAID level takes much of the sting out of this risk.

The following example creates a RAID 5 array for simplicity's sake. In the first step, the admin creates a raw image on each of three systems (Figures 1 and 2). The RAID array is easily generated with the mdadm command (Listing 1). On the newly created RAID array, you can then create, for example, an ext4 filesystem:

mkfs.ext4 -L nwraid /dev/md0

Listing 1

mdadm: RAID Array

# mdadm --create /dev/md0 --auto md --level=5 --raid-devices=3 /dev/etherd/e1.1 /dev/etherd/e2.1 /dev/etherd/e3.1

mdadm: Defaulting to version 1.2 metadata

mdadm: array /dev/md0 started.
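RAID 5 spends the equivalent of one member on parity, so the usable space is the size of the smallest member multiplied by the number of members minus one. The sketch below works this out for three images of 10000 MiB each, ignoring the small amount of space mdadm reserves for its metadata. The helper name raid5_usable_mib is hypothetical.

```shell
#!/bin/sh
# Usable capacity of a RAID 5 array: one member's worth of space goes to parity.
# raid5_usable_mib is a hypothetical helper; sizes are in MiB and metadata
# overhead is ignored.
raid5_usable_mib() {
    members=$1
    smallest_mib=$2
    echo $(( (members - 1) * smallest_mib ))
}

raid5_usable_mib 3 10000    # three 10000 MiB images, prints 20000
```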

To revive the RAID array on another system, in case of initiator failure, without any great loss of time, you will want to create a matching mdadm configuration file (mdadm.conf) and store it in a safe place (Listing 2).

Listing 2

mdadm.conf
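A configuration file of this kind can be generated from the running array rather than written by hand. The following sketch assumes the array definitions come from mdadm --detail --scan and that restricting the DEVICE line to /dev/etherd nodes suits your setup; the function name write_mdadm_conf and the backup path in the usage comment are hypothetical.

```shell
#!/bin/sh
# Write a minimal mdadm.conf from ARRAY lines read on standard input.
# write_mdadm_conf and the DEVICE pattern are assumptions for this setup.
write_mdadm_conf() {
    outfile=$1
    {
        # Limit device scanning to AoE block devices.
        echo 'DEVICE /dev/etherd/e*.*'
        # Keep only the ARRAY definitions from the input.
        grep '^ARRAY '
    } > "$outfile"
}

# Typical use as root on the initiator while the array is running:
#   mdadm --detail --scan | write_mdadm_conf /root/mdadm.conf.nwraid
```

Storing the resulting file somewhere other than the initiator itself is the point of the exercise, because it is the initiator whose loss the file is meant to cover.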

If you are game for an adventure, you can use Btrfs instead of mdadm; after all, it is much simpler to handle than mdadm, and it offers more options – such as inline compression or snapshots. Listing 3 shows how easy it is to set up a RAID array with Btrfs.

Listing 3

Btrfs: RAID Array

# mkfs.btrfs -d raid5 -m raid5 -L nwraid /dev/etherd/e1.1 /dev/etherd/e2.1 /dev/etherd/e3.1

WARNING! - Btrfs v0.20-rc1 IS EXPERIMENTAL

WARNING! - see http://btrfs.wiki.kernel.org before using

adding device /dev/etherd/e2.1 id 2

adding device /dev/etherd/e3.1 id 3

Setting RAID5/6 feature flag

fs created label nwraid on /dev/etherd/e1.1

nodesize 4096 leafsize 4096 sectorsize 4096 size 29.30GB

Btrfs v0.20-rc1
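The size reported by mkfs.btrfs is the raw total of all member devices, not the usable RAID 5 capacity. Assuming each of the three images was created with the dd command shown earlier (10000 MiB each), the figure can be reproduced as follows; mib_to_gib is a hypothetical helper name.

```shell
#!/bin/sh
# Convert a MiB total to binary gigabytes with two decimals.
# mib_to_gib is a hypothetical helper name.
mib_to_gib() {
    awk -v mib="$1" 'BEGIN { printf "%.2f\n", mib / 1024 }'
}

mib_to_gib $((3 * 10000))   # three 10000 MiB members, prints 29.30
```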

Backup and Virtualization

If you frequently need to create disk backups over the network (as is often the case, e.g., in data forensics), you will really enjoy working with AoE: Providing a disk over the network is much faster than with iSCSI.

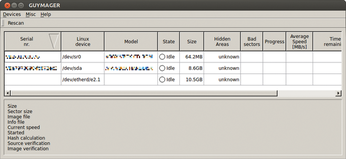

AoE is also much more convenient than a backup using Netcat in conjunction with dd or ewfacquire, and forensic scientists will love the fact that the popular backup program Guymager [2] instantly identifies AoE devices (Figure 3).

Figure 3: The forensic tool Guymager recognizes AoE drives at once and supports fast saving of whole data storage devices over the network.

The one drawback is that vblade is currently only included with Debian, on the Grml Live CD [3], and in Caine [4] version 5. Users with other distributions need to turn to SourceForge [5]. In response to my inquiries to the major projects, only DEFT Linux [6] responded with a promise to add vblade in the next release.

Virtualizing Seized Systems

AoE is also a useful tool for virtualization purposes. In conjunction with xmount [7], admins can quickly serve up a secured image as a virtual disk (Listing 4). Forensic scientists who want to deploy a disk image in EnCase format with write support should first convert it to a raw image and then export the raw file as a disk.

Listing 4

xmount

# xmount --in ewf --out dd --cache file.ovl diskimage.e?? /mnt/analysis

# vblade 2 3 eth0 /mnt/analysis/diskimage.dd

On a remote computer, the system can then be virtualized with qemu-kvm, for example. In the simplest possible case, you would enter

qemu-kvm -m 1024 -hda /dev/etherd/e2.3

This call deliberately does without possible optimizations and makes no changes to the host system for the new environment; in return, it outputs much clearer error messages.

Failure of Target or Initiator

If one target fails, this is basically nothing more than the failure of a hard disk in a normal RAID array. You only need to replace the defective unit with a fully functional one, and you can replace a physical hard disk with a raw image on a different machine, for example.

The new disk needs to be at least as large as the original; you can then share it via vblade-persist. For example, to remove the failed device connected as e2.1 (which the array must already have marked as faulty), you would issue the following command:

mdadm /dev/md0 --remove /dev/etherd/e2.1

then add the new device (e4.1 in this example) to the RAID array:

mdadm /dev/md0 --add /dev/etherd/e4.1

In the background, the software will now start the rebuild process, which may take several hours to complete depending on the size of the array and the speed of the network.
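The kernel reports rebuild progress in /proc/mdstat. The following sketch extracts the recovery percentage for one array; it assumes the usual mdstat layout with a "recovery = NN.N%" line below the array entry. The helper name rebuild_progress is hypothetical.

```shell
#!/bin/sh
# Print the recovery percentage for an md array, or nothing if no rebuild runs.
# rebuild_progress is a hypothetical helper; the second argument lets you
# point it at a saved copy of /proc/mdstat.
rebuild_progress() {
    array=${1:-md0}
    statfile=${2:-/proc/mdstat}
    awk -v dev="$array" '
        $1 == dev && $2 == ":" { in_array = 1; next }   # start of the array block
        in_array && NF == 0    { in_array = 0 }         # blank line ends the block
        in_array && /recovery *=/ {
            for (i = 1; i <= NF; i++)
                if ($i ~ /%$/) { print $i; exit }
        }
    ' "$statfile"
}

# Typical use on the initiator:
#   rebuild_progress md0
```

An empty result means that no recovery line was found for the array, which is the normal state once the rebuild has finished.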

If an initiator fails, however, the array can simply be reassembled on a new system. In this case, you can restore the previously saved configuration or run mdadm directly:

mdadm --assemble /dev/md0 /dev/etherd/e1.1 /dev/etherd/e2.1 /dev/etherd/e3.1

In this case, the command restores the RAID on another system.
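Before assembling on a replacement initiator, it helps to confirm that all member devices are actually visible there. The following is a minimal sketch; missing_members is a hypothetical helper name.

```shell
#!/bin/sh
# List member devices that are not (yet) visible on this initiator.
# missing_members is a hypothetical helper name.
missing_members() {
    for dev in "$@"; do
        [ -e "$dev" ] || printf '%s\n' "$dev"
    done
}

# Typical use as root before assembling:
#   set -- /dev/etherd/e1.1 /dev/etherd/e2.1 /dev/etherd/e3.1
#   [ -z "$(missing_members "$@")" ] && mdadm --assemble /dev/md0 "$@"
```

If the helper prints any device names, loading the aoe module and running aoe-discover on the new initiator is the first thing to check.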

Conclusions

ATA over Ethernet is a promising protocol that greatly simplifies many tasks. Used correctly, AoE facilitates the work of administrators and forensic experts; in many cases, you can even virtualize RAID structures.

Infos

[1] AoE standard: http://support.coraid.com/documents/AoEr11.txt

[2] Guymager: http://guymager.sf.net

[3] Grml: http://www.grml.org

[4] Caine: http://www.caine-live.net

[5] AoE tools on SourceForge: http://aoetools.sourceforge.net

[6] DEFT Linux: http://www.deftlinux.net

[7] xmount homepage: http://www.pinguin.lu