In statistical computations, intuition can be very misleading

Guess Again

Even hardened scientists can make mistakes when interpreting statistics. Mathematical experiments can give you the right ideas to prevent this from happening, and quick simulations in Perl nicely illustrate and support the learning process.

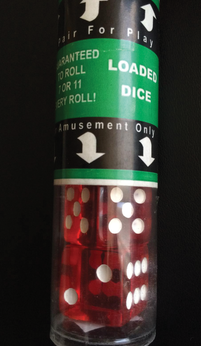

If you hand somebody a die in a game of Ludo [1], and they throw a one on each of their first three turns, they are likely to become suspicious and check the sides of the die. That's just relying on intuition, but when can you scientifically demonstrate that the die is loaded (Figure 1)? After five throws that all come up as ones? After ten throws?

Each experiment with dice is a game of probabilities. What exactly happens on any one throw is a matter of chance. It is not so much the result of a single throw that is relevant, but the tendency over many throws. A player could throw three ones in succession from pure bad luck: with a fair die, the odds are 1 in 216 (about 0.5 percent). That is low, but it still happens, and you would be ill advised to jump to conclusions about the die based on such a small number of attempts.
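To get a feel for how rare such a streak is, a quick simulation helps. The following is a minimal sketch in Python rather than the Perl the article goes on to use; the function names `prob_all_ones` and `simulate_all_ones`, the trial count, and the fixed seed are assumptions made for illustration, not taken from the original listings. It compares the exact probability of throwing only ones with a fair die against the frequency observed in repeated simulated games.

```python
import random


def prob_all_ones(throws: int) -> float:
    """Exact probability that a fair six-sided die shows a one on every throw."""
    return (1 / 6) ** throws


def simulate_all_ones(throws: int, trials: int, seed: int = 42) -> float:
    """Estimate the same probability by simulating `trials` independent streaks.

    The seed is fixed only so that repeated runs give the same estimate.
    """
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        # all() stops at the first throw that is not a one
        if all(rng.randint(1, 6) == 1 for _ in range(throws)):
            hits += 1
    return hits / trials


if __name__ == "__main__":
    trials = 200_000  # hypothetical choice; more trials give a tighter estimate
    for n in (3, 5, 10):
        exact = prob_all_ones(n)
        estimate = simulate_all_ones(n, trials)
        print(f"{n:2d} throws: exact {exact:.3e}, simulated {estimate:.3e}")
```

For a fair die, the exact values are 1 in 216 for three throws, 1 in 7,776 for five, and roughly 1 in 60 million for ten. With a few hundred thousand trials, the simulated estimate for ten throws will usually be zero, which shows that a small simulation cannot resolve very rare events.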

[...]
