Simple web scraping with Bash

Ski Report

© Photo by Nicolai Berntsen on Unsplash

With one line of Bash code, Pete scrapes the web and builds a desktop notification app to get the daily snow report.

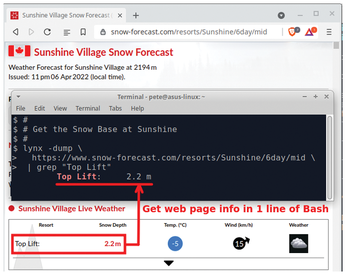

While working on a small project recently, I was amazed by how much web scraping I could do with just one line of Bash. I used the text-based Lynx browser [1] and piped its output to grep. Figure 1 shows the one-line Bash example that scrapes the current snow depth from the Sunshine Village Snow Forecast web page.
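As a rough illustration of the Lynx-to-grep approach, the following minimal sketch wraps the two steps in small functions. The function names, the search pattern, and the placeholder URL are my own assumptions for illustration; they are not taken from Figure 1.

```shell
#!/usr/bin/env bash

# Dump a web page as plain text.
# -dump writes the rendered page to stdout; -nolist omits the list of links.
page_text() {
  lynx -dump -nolist "$1"
}

# Print only the first line that matches a case-insensitive pattern.
first_match() {
  grep -i -m 1 -- "$1"
}

# Example call (placeholder URL, assumed page wording):
# page_text "https://example.com/snow-report" | first_match "snow depth"
```

Splitting the fetch from the filter makes it possible to try the grep pattern on saved text before pointing the script at a live page.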

In this article, I introduce some techniques for scraping web pages easily and then create a desktop notification script that provides the daily snow forecast.
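To give a feel for the notification side, here is a minimal sketch that assumes the `notify-send` tool from libnotify is installed. The function name and the "Snow Report" title are hypothetical, and the fallback to plain output is my own addition rather than part of the article's script.

```shell
#!/usr/bin/env bash

# Show a desktop notification with the scraped text.
# Falls back to printing on stdout when notify-send is not available
# (an assumption added for this sketch).
notify_snow() {
  local title="Snow Report"
  local body="$1"
  if command -v notify-send >/dev/null 2>&1; then
    notify-send "$title" "$body"
  else
    printf '%s: %s\n' "$title" "$body"
  fi
}

# Example call with a made-up value:
# notify_snow "Top snow depth: 150 cm"
```

A script like this could be run once a day from cron or a systemd timer, although the scheduling choice is outside what this sketch covers.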

[...]

