Bringing Up Clouds

Core Technologies

VM instances in the cloud are different beasts, even if they all start from the same image. Discover how they get their configuration in this month's Core Technologies.

First a buzzword and then a commodity, the cloud has lived the typical life of an IT industry phenomenon. As a result, running something (usually Linux) in the cloud is now something you do more often than not. From a user's perspective, it's simple: You click a button on the cloud provider's dashboard and have your virtual machine (VM) running within a minute.

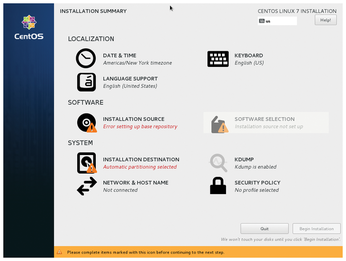

This is drastically different from what you do on your desktop. There, you insert a DVD or plug in a USB pen drive and launch the installer. Whether it's an old-school text-based installer or a slick GUI one, it typically asks you a few questions (Figure 1): Which locale do you want to use? What's your computer's hostname? What's your time zone? What do you want to name your user account? Which password do you want to use? You may not even notice these questions, because installation takes a quarter of an hour or more, and you spend most of that time sipping coffee or chatting with friends. Yet the answers are essential for the system to operate. Without a password, you won't be able to log in. Or, even worse, everyone will be able to.
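To make the installer questions concrete, here is a minimal Python sketch that models the answers as a single record and flags the ones that would leave the system unusable or open. The `InstallAnswers` class and the `problems()` function are hypothetical illustrations, not part of any real installer. Treating a missing password as "nobody can log in" and an empty password as "anyone can log in" is a simplifying assumption that mirrors the two failure modes described above.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class InstallAnswers:
    """Hypothetical record of the answers a desktop installer collects."""
    locale: str
    hostname: str
    timezone: str
    username: str
    # Assumption: None means "no password set", "" means "empty password".
    password: Optional[str]


def problems(answers: InstallAnswers) -> List[str]:
    """Return the issues that would make the installed system unusable or insecure."""
    issues = []
    if not answers.hostname:
        issues.append("hostname is empty")
    if not answers.username:
        issues.append("user account has no name")
    if answers.password is None:
        # Simplification: no password at all means nobody can log in.
        issues.append("no password: you can't log in")
    elif answers.password == "":
        # Simplification: an empty password means anyone can log in.
        issues.append("empty password: anyone can log in")
    return issues


if __name__ == "__main__":
    answers = InstallAnswers(
        locale="en_US.UTF-8",
        hostname="desktop",
        timezone="Europe/Berlin",
        username="alice",
        password="",
    )
    print(problems(answers))  # ['empty password: anyone can log in']
```

Every machine installed this way gets its own set of answers, and that is precisely what a single cloud image cannot carry for all of its instances.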

[...]

Support Our Work

Linux Magazine content is made possible with support from readers like you. Please consider contributing when you’ve found an article to be beneficial.
