Process Tracing

Core Technology

Ever wondered what processes are currently doing on your system? Linux has a capable mechanism to answer your questions.

Processes are, in general, the units of isolation within a Unix system. This is perhaps the most important abstraction the kernel provides, because it means that a malicious or badly written program cannot directly interfere with well-behaved ones. Isolation is the foundation of safety, but sometimes you want to turn it off.
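To make the isolation concrete, here is a minimal sketch, assuming a Unix-like system where `os.fork()` is available. The names `counter` and `demo_isolation` are made up for this illustration. A child process changes a variable, and the parent's copy stays untouched; the only way the child's value reaches the parent is through an explicit channel (a pipe).

```python
import os

counter = 0  # lives in this process's private address space


def demo_isolation():
    """Fork a child that modifies `counter`; return (parent_value, child_value)."""
    global counter
    counter = 0
    read_fd, write_fd = os.pipe()

    pid = os.fork()
    if pid == 0:
        # Child: change its own copy of the variable and report it via the pipe.
        os.close(read_fd)
        counter = 42
        os.write(write_fd, str(counter).encode())
        os._exit(0)

    # Parent: close the unused write end so read() sees EOF when the child exits.
    os.close(write_fd)
    with os.fdopen(read_fd) as reader:
        child_value = int(reader.read())
    os.waitpid(pid, 0)

    # The parent's `counter` was never touched by the child's assignment.
    return counter, child_value


if __name__ == "__main__":
    parent_value, child_value = demo_isolation()
    print(f"child saw {child_value}, parent still sees {parent_value}")
```

After the fork, both processes start from the same memory contents, but every write goes to the writer's own copy. That is exactly the property a debugger has to work around.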

Think of the interactive GNU Debugger (GDB) (Figure 1). You certainly want it to stop your code at specified points or execute it step by step, and it is hardly useful if it can't set watches or otherwise peek into the program being debugged. However, the debugger and the program it debugs are two different, isolated processes, so how can this possibly work?
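On Linux, the interface debuggers such as GDB rely on for this is the `ptrace(2)` system call, which the text above does not name. The following is only a rough sketch of the idea, assuming Linux with glibc and a security policy (container seccomp profile, for example) that allows a process to trace its own child. It calls `ptrace` through `ctypes`; the function name `trace_child` and the exit code 7 are invented for the example, while the two request numbers are the values from `<sys/ptrace.h>`.

```python
import ctypes
import os
import signal

# Request numbers from <sys/ptrace.h> on Linux.
PTRACE_TRACEME = 0
PTRACE_CONT = 7

# Assumption: libc symbols are reachable through the running process (glibc).
libc = ctypes.CDLL(None, use_errno=True)
libc.ptrace.restype = ctypes.c_long
libc.ptrace.argtypes = [ctypes.c_long, ctypes.c_int,
                        ctypes.c_void_p, ctypes.c_void_p]


def trace_child():
    """Trace a forked child; return (stopped, stop_signal, exit_code)."""
    pid = os.fork()
    if pid == 0:
        # Child: volunteer to be traced by the parent, then stop itself.
        if libc.ptrace(PTRACE_TRACEME, 0, None, None) != 0:
            os._exit(1)  # tracing not permitted in this environment
        os.kill(os.getpid(), signal.SIGSTOP)
        os._exit(7)  # reached only after the tracer resumes us

    # Parent (the "debugger"): the kernel reports the child's stop to us.
    _, status = os.waitpid(pid, 0)
    if not os.WIFSTOPPED(status):
        # The child exited without stopping, so there is nothing to resume.
        code = os.WEXITSTATUS(status) if os.WIFEXITED(status) else None
        return False, None, code
    stop_signal = os.WSTOPSIG(status)

    # A real debugger would inspect registers and memory here.
    # Resume the child; a data argument of 0 suppresses the pending signal.
    libc.ptrace(PTRACE_CONT, pid, None, None)

    _, status = os.waitpid(pid, 0)
    exit_code = os.WEXITSTATUS(status) if os.WIFEXITED(status) else None
    return True, stop_signal, exit_code


if __name__ == "__main__":
    print(trace_child())
```

While the child is stopped, the tracer is in control: the child makes no progress until the parent explicitly lets it continue. Breakpoints and single-stepping build on the same stop-inspect-resume cycle.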

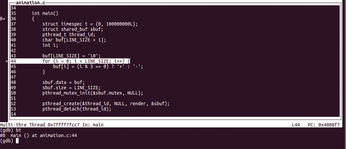

Figure 1: GDB comes with an interactive text-mode user interface, too. To enter or leave, type C-x C-a (TUI key bindings [1]).

[...]
