Filtering log messages with Splunk

To analyze massive amounts of log data from very different sources, you need a correspondingly powerful tool. It needs to bring together text messages from web and application servers, network routers, and other systems, while also supporting fast indexing and querying.

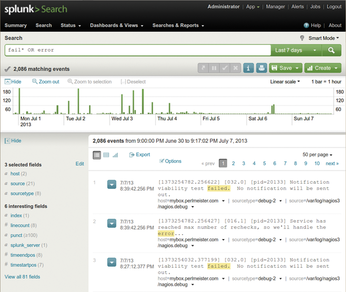

The commercial Splunk tool [1] has proven itself in this field, even in the data centers of large Internet companies, and the basic version is freely available for home use on standard Linux platforms. After installation, `splunk start` launches the daemon and the web interface, where users can configure the system and submit queries, much as they would on an Internet search engine (Figure 1).

Figure 1: Following a search command, Splunk presents the errors recorded in all real-time-imported logs.
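
To make the idea of indexing and querying logs from different sources more concrete, here is a minimal Python sketch of a keyword index over a few log lines. It is an illustration only and says nothing about how Splunk works internally; the sample log lines, the source names, and the functions `build_index` and `search` are all hypothetical.

```python
import re
from collections import defaultdict


def build_index(logs):
    """Map each lowercase token to the (source, line number) pairs containing it."""
    index = defaultdict(set)
    for source, lines in logs.items():
        for number, line in enumerate(lines):
            for token in re.findall(r"[a-z0-9]+", line.lower()):
                index[token].add((source, number))
    return index


def search(index, logs, *terms):
    """Return (source, line) pairs whose line contains every search term."""
    if not terms:
        return []
    # Intersect the posting sets of all terms; unknown terms yield no hits.
    hits = set.intersection(*(index.get(t.lower(), set()) for t in terms))
    return [(source, logs[source][number]) for source, number in sorted(hits)]


# Hypothetical sample data standing in for logs from two different systems.
logs = {
    "webserver": ["GET /index.html 200", "GET /missing 404 error"],
    "router": ["link up eth0", "error: link down eth1"],
}

index = build_index(logs)
results = search(index, logs, "error")
for source, line in results:
    print(f"{source}: {line}")
```

Building the index once and answering each query with set intersections is what keeps lookups fast as the volume of data grows; a real log platform adds timestamps, field extraction, and persistent storage on top of this basic idea.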

[...]
