Secure storage with GlusterFS

Bright Idea

You can create distributed, replicated, and high-performance storage systems using GlusterFS and some inexpensive hardware. Kurt explains.

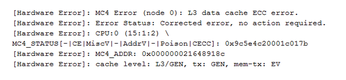

Recently I had a CPU cache memory error pop up in my file server logfile (Figure 1). I don't know if that means the CPU is failing, or if it got hit by a cosmic ray, or if something else happened, but now I wonder: Can I really trust this hardware with critical services anymore? When it comes to servers, the failure of a single component, or even a single system, should not take out an entire service.

In other words, I'm doing it wrong by relying on a single server to provide my file-serving needs. Even though I have the disks configured in a mirrored RAID array, this won't help if the CPU goes flaky and dies; I'll still have to build a new server, and move the drives over, and hope that no data was corrupted. Now imagine that this isn't my personal file server but the back-end file server for your system boot images and partitions (because you're using KVM, RHEV, OpenStack, or something similar). In this case, the failure of a single server could bring a significant portion of your infrastructure to its knees. Thus, the availability aspect of the security triad (i.e., Availability, Integrity, and Confidentiality, or AIC) was not properly addressed, and now you have to deal with a lot of angry users and managers.

[...]

Buy this article as PDF

(incl. VAT)

Buy Linux Magazine

Subscribe to our Linux Newsletters

Find Linux and Open Source Jobs

Subscribe to our ADMIN Newsletters

Support Our Work

Linux Magazine content is made possible with support from readers like you. Please consider contributing when you’ve found an article to be beneficial.

News

-

Ubuntu 26.04 Beta Arrives with Some Surprises

Ubuntu 26.04 is almost here, but the beta version has been released, and it might surprise some people.

-

Ubuntu MATE Dev Leaving After 12 years

Martin Wimpress, the maintainer of Ubuntu MATE, is now searching for his successor. Are you the next in line?

-

Kali Linux Waxes Nostalgic with BackTrack Mode

For those who've used Kali Linux since its inception, the changes with the new release are sure to put a smile on your face.

-

Gnome 50 Smooths Out NVIDIA GPU Issues

Gamers rejoice, your favorite pastime just got better with Gnome 50 and NVIDIA GPUs.

-

System76 Retools Thelio Desktop

The new Thelio Mira has landed with improved performance, repairability, and front-facing ports alongside a high-quality tempered glass facade.

-

Some Linux Distros Skirt Age Verification Laws

After California introduced an age verification law recently, open source operating system developers have had to get creative with how they deal with it.

-

UN Creates Open Source Portal

In a quest to strengthen open source collaboration, the United Nations Office of Information and Communications Technology has created a new portal.

-

Latest Linux Kernel RC Contains Changes Galore

Linux kernel 7.0-rc3 includes more changes than have been made in a single release in recent history.

-

Nitrux 6.0 Now Ready to Rock Your World

The latest iteration of the Debian-based distribution includes all kinds of newness.

-

Linux Foundation Reports that Open Source Delivers Better ROI

In a report that may surprise no one in the Linux community, the Linux Foundation found that businesses are finding a 5X return on investment with open source software.