Backdoors in Machine Learning Models

Miseducation

Machine learning can be maliciously manipulated – we'll show you how.

Interest in machine learning has grown rapidly over the past 20 years due to major advances in speech recognition and automatic text translation. Recent developments (such as generating text and images, as well as solving mathematical problems) have shown the potential of learning systems. Because of these advances, machine learning is also increasingly used in safety-critical applications, for example, in autonomous driving or in access systems that evaluate biometric characteristics. Machine learning is never error-free, however, and wrong decisions can sometimes lead to life-threatening situations. These limitations are well known and are usually taken into account when developing and integrating machine learning models. For a long time, however, less attention has been paid to what happens when someone intentionally tries to manipulate the model.

Adversarial Examples

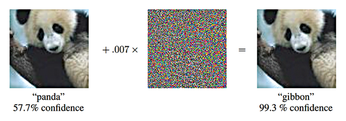

Experts have raised the alarm about the possibility of adversarial examples [1] – deliberately manipulated images that can fool even state-of-the-art image recognition systems (Figure 1). In the most dangerous case, people cannot even perceive a difference between the adversarial example and the original image from which it was computed. The model correctly identifies the original, but it fails to correctly classify the adversarial example. An attacker can even predetermine the category into which the adversarial example will be misclassified. Further developments [2] in adversarial examples have shown that you can also manipulate the texture of real-world objects such that a model misclassifies the manipulated objects – even when viewed from different directions and distances.

Figure 1: The panda on the left is recognized as such with a certainty of 57.7 percent. Adding a certain amount of noise (center) creates the adversarial example. Now the animal is classified as a gibbon [3]. © arXiv preprint arXiv:1412.6572 (2014)
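
To make the idea of "adding a certain amount of noise" concrete, the following minimal sketch applies the fast gradient sign method (FGSM) described in the preprint credited in Figure 1 (arXiv:1412.6572). It is an illustration under simplifying assumptions, not the setup behind the figure: The model is a toy logistic regression classifier rather than an image recognition network, and the weights `w`, the input `x`, and the step size `eps` are hypothetical values chosen for demonstration.

```python
import numpy as np


def sigmoid(z):
    """Logistic function mapping a score to a probability."""
    return 1.0 / (1.0 + np.exp(-z))


def predict(x, w, b):
    """Return the predicted class (0 or 1) of a logistic regression model."""
    return int(sigmoid(w @ x + b) >= 0.5)


def fgsm(x, y, w, b, eps):
    """Fast gradient sign method for a logistic regression classifier.

    Moves every feature of x by at most eps in the direction that
    increases the cross-entropy loss for the true label y.
    """
    p = sigmoid(w @ x + b)
    grad_x = (p - y) * w  # gradient of the cross-entropy loss w.r.t. x
    return x + eps * np.sign(grad_x)


# Hypothetical toy model: 100 random weights stand in for a trained model.
rng = np.random.default_rng(0)
w = rng.normal(size=100)
b = 0.0

# Hypothetical clean input that the model assigns to class 1.
x = 0.05 * np.sign(w)
y = 1

x_adv = fgsm(x, y, w, b, eps=0.1)

print("clean prediction:      ", predict(x, w, b))
print("adversarial prediction:", predict(x_adv, w, b))
print("largest change per feature:", np.max(np.abs(x_adv - x)))
```

In this toy setup, the prediction flips from class 1 to class 0 even though no single feature changes by more than `eps`. Many small, coordinated changes add up to a large shift in the model's score, which is why the perturbation of a high-dimensional input such as an image can stay imperceptible.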

[...]
